3 Simple Opportunities to Improve Your Thinking with AI

Before, During, and After the Prompt

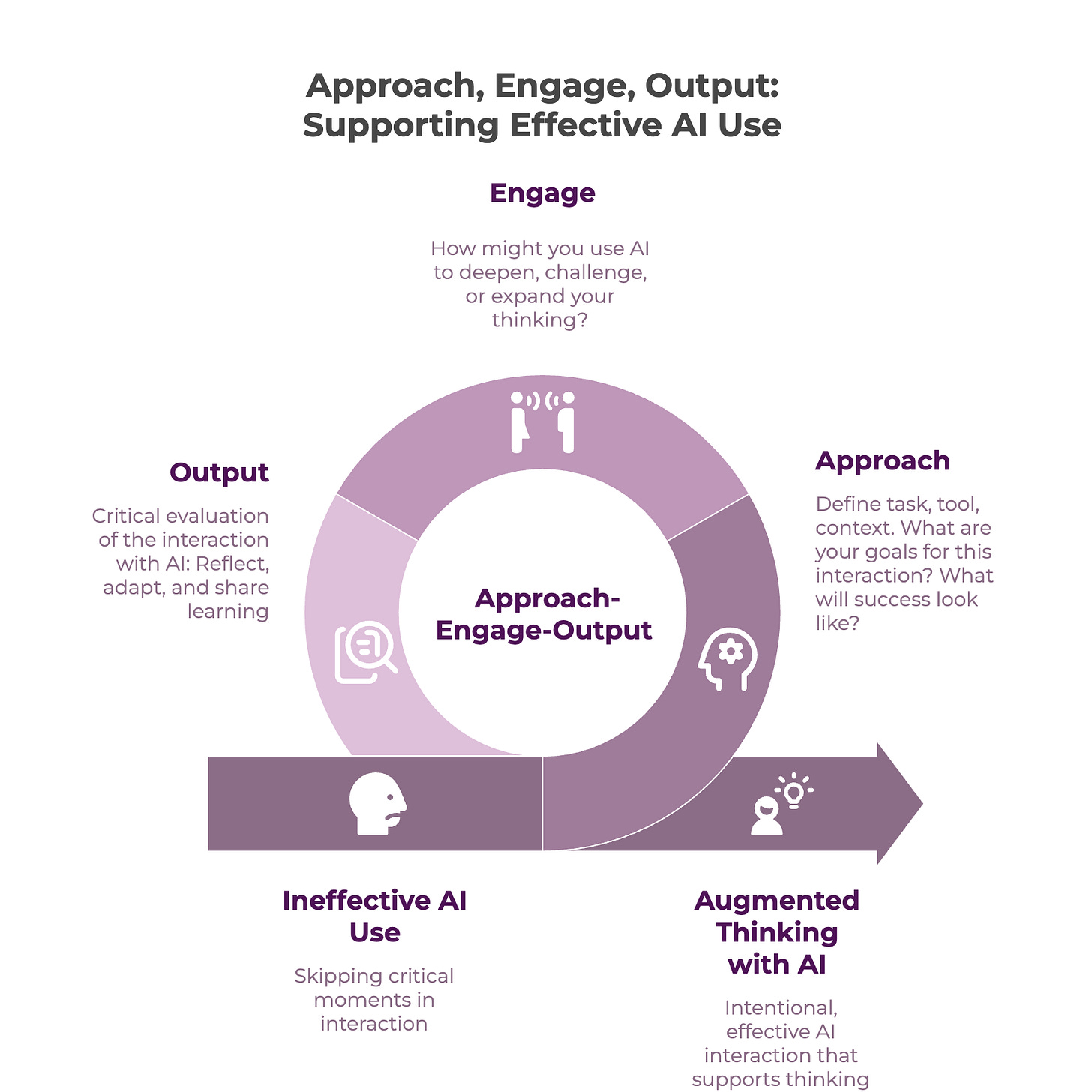

TL;DR: The quality of what you get from AI may have less to do with your prompts than with choices you’re making (or not making) before and after the chat window. We’ve been experimenting with a simple three-part framework — approach, engage, output — and it’s changed how we think about AI as a tool that makes us better thinkers, not just more productive.

Have you ever opened an AI tool, typed something in, gotten something back, used it (or not), and closed the tab? Of course you have! We all have. We’re all pressed for time, and in those moments where the AI ‘easy-button’ is tempting, it’s effortless to fall into that cycle. You prompted, it responded, you moved on.

But recent studies (such as this one from MIT, a response from Harvard, and this sponsored Atlantic piece from Google) are highlighting the potential cognitive risks and pitfalls of superficial or over-reliant AI use, and what we should be doing instead. There are plenty of headlines about AI making us “dumber,” and many people are asking how they can prevent this from happening to themselves or their colleagues. In our experimentation, we’ve been wondering how we might engage with AI in ways that support rather than undermine our thinking.

We’ve both been using AI regularly in our consulting practices for well over two years now. Kelci mostly lives in ChatGPT; Valerie mostly works in Claude and occasionally Gemini. We use different tools, have different workflows, and gravitate toward different features. But, as we started comparing notes (which we do often, because experimenting with AI alongside someone else is genuinely one of the best ways to learn), we noticed the same thing: the quality of what we were getting out of AI — both in terms of its outputs as well as our experience of feeling ‘augmented’ by it — had less to do with the prompts themselves and more to do with decisions we were making around the prompts. Before we opened the tool. During the conversation. After we closed it.

Those invisible moments turned out to be where a lot of important thinking takes place, but they’re the part that a lot of AI conversations and trainings skip over. What thinking takes place at those stages, and how might we attend to it so that we use AI in ways that augment our thinking?

A simple framework: Approach, Engage, Output

We’ve started paying attention to three distinct moments in any AI interaction. They aren’t steps in a linear process. They’re moments of choice, and by noticing the opportunities we have at each one, we can more effectively engage with AI as a tool that helps us deepen our own thinking and augments our work.

Approach is everything that happens before you start typing. It’s how you’re approaching your AI use/task. Why are you reaching for AI right now? Which tool? What context are you bringing in, and what are you deliberately leaving out? Are you trying to think something through, or trying to produce something? Those are very different starting points, and they shape everything that follows. Building your awareness of what you’re trying to do with the help of AI is a critical step for setting you up to notice whether your interaction is getting you where you need to go.

Engage is the back-and-forth itself. This is what you’re actually doing with your AI tool. How you prompt, how you question, how much you hand over versus how much you hold onto, what role you’re asking the AI to play versus what role you keep for yourself. Where you circle back to check or challenge your own bias - or that of the AI. This is where most AI advice lives (better prompts! chain of thought! give it a persona!), and while it matters, it’s only part of the picture.

Output is what you do with what comes back from the AI. It’s everything that happens next, after you’ve engaged with the AI. How you interpret, check, adapt, and share it. What you notice about your own thinking in the process. Where you draw lines. How you reflect, and how you share your learning with others.

Recognizing these three distinct moments came out of a learning community we’re both part of that explores AI and organizational learning (grounded in a practice called Emergent Learning). While trying to articulate how we were using AI in our work, we noticed the different ways we made choices along the way, and we discovered that naming and noticing these moments more explicitly made us much more intentional about the possibility (and peril) that accompanies each one.

Let’s explore each one in depth.

What “approach” looks like in practice

The approach stage is the easiest one to skip, yet skipping it is also the most likely cause of disappointment in early experiments with AI. You have a task, you open a chat, you start typing. But the choices you make (or don’t make) before that first prompt can shape everything.

Being intentional about the “approach” stage means getting clear on the task you actually want to do, understanding if the tool you’ve chosen is the best one for that task, considering (and finding) the data or context you’ll need to give it, and reflecting on what you’re actually expecting the AI to handle. In other words, how do you want to think with and relate to AI for this task?

This can be a great place to do a simple Before Action Review (BAR), pausing to ask: What are our goals for using AI? How will we know if we’ve achieved them? What have we learned from prior uses? What challenges might we encounter?

This can work well for individuals wanting to maintain awareness of their own thinking, but it can also be incredibly useful as an alignment exercise for teams or departments that are considering how AI might handle a specific task or workflow. Chances are, in answering these questions, you’ll realize that not everyone is aligned on the actual goals for the task and how best to use AI. And even if you are aligned, the work of clarifying the goals sets you up well for decisions about layering in additional AI use (such as collaborative projects, custom GPTs, or agents) that you might evolve into.

We’ve both been experimenting with context-rich spaces (projects, custom GPTs, Gems, depending on your platform), which are fundamentally different from one-off prompting. Instead of dropping a question into a blank chat, you create a tailored space with background information, goals, and instructions preloaded so the AI responds with more specificity. Our different goals have shaped how we approach AI, and resulted in different types of augmentation to our thinking.

Kelci’s approach: building elaborate context, iterating over time.

I (Kelci) have about 10 custom GPTs now for different purposes: a project-specific strategy space, a copywriting helper, an Instagram caption assistant (for my art). For a complex strategy project, I uploaded my goals, working hypotheses, assumptions about the system, and descriptions of key stakeholders. Now I can jump straight into higher-order questions (“Given the goals and constraints we’ve discussed, what are three different strategy paths that reflect three different worldviews?”) and get output that is recognizably about that project, not generic. In all these cases, I’m looking for a very specific output, and setting up a GPT to help me get there.

Here’s the part that made this easier: I asked ChatGPT to help me design my GPTs. I told ChatGPT, “I want to create a custom GPT that will help me with X, Y, and Z. Help me generate the instructions and knowledge files I should use.” In some cases just my description was enough - but for more in-depth project context I could also provide additional files that contained much more information for the AI to use in building my GPTs. ChatGPT would write the instructions and knowledge files for me, and once I’d reviewed them to make sure everything was correct, I could pop the instructions into a project or custom GPT. I find that maintaining these spaces is a bit like tending a garden: pulling weeds (removing phrases the AI fixates on and keeps resurfacing even when I don’t want them, changing “dos” or “don’ts” so it’s less likely to output things in ways I don’t want), adjusting the watering (removing formats or structures that started to feel overly constraining), and slowly shaping the behavior over time (e.g., by giving it back a rewritten document and asking it to compare its output to mine, and to tell me how to tweak the instructions to get closer to my version).

Valerie’s approach: starting with no instructions at all.

While I (Valerie) have set up some project spaces similarly to Kelci, one of my first experimental uses was to set up a project space in Claude to steward a community of practice over 15 months. My deliberate choice was no instructions, only files. I set this up at the start of the journey with the goal of simply keeping the information in one place and seeing what uses might emerge. As one person with an unpredictable memory trying to maintain continuity across monthly meetings, I just needed AI to hold information. I started by giving it an overview document about our learning framework, notes from the conversation that led to the group’s formation, and pre-group survey data. Then, as time progressed and we had more meetings, I added the transcript and chat from each session. Over the first year, it grew to 26 documents. No instructions, ever, until just recently when I started considering adding light ones. The goal was for the space to be a container from which I could extract insights as the group evolved.

The space became a kind of connective tissue I could query in different ways. I could ask for summaries, cross-links to previous calls, or use it to help find examples showing how a question had evolved over time. I used it to plan the agenda for a session by looking across prior meetings to identify what questions kept returning. I was able to use it to help me, as the facilitator, take a step back and see the group more holistically and from points of view outside of my own. This was a relatively simple use that helped me be a better steward of this group.

What we both learned: We share these examples to show how we made very different choices about how much structure to bring before ever typing a prompt. Kelci’s example shows how to create more context and constraints through files and instructions, in order to achieve very specific types of outputs. Valerie’s example shows how withholding instructions can let the context serve more as a repository.

The key here is that neither approach is the “right” one. But both were intentionally chosen, and that’s the point. The approach stage is where you clarify what you actually need AI to help you do (and what you don’t). When you skip noticing the constraints and context you’ve set up (or what you haven’t), you’re still making choices - you’re just making them without seeing how they shape what the AI will produce.

What “engage” can actually be

The engagement stage is where most people’s attention around AI capabilities lives. We’re awash in a sea of prompt guides and templates, use case libraries, and copy-and-paste workflows. All of these encourage immediate engagement, in part because so many of us know that the best way to learn about AI (both its risks and capabilities) is to experiment. So reducing the friction for that can be helpful. But there’s a wide range between “type a prompt and accept what comes back” and what’s actually possible when you’re intentional about how you engage.

Kelci’s engagement practice: strategic sparring

I (Kelci) use AI a lot for what I think of as deepening and sparring with my own thinking. For instance, I might ask AI to compare thinkers who have fundamentally different worldviews on a question I’m wrestling with (say, adrienne maree brown and Richard Rumelt on what makes a good strategy in complex adaptive systems). Then I push further: What critiques exist of these views? What perspectives are missing? What worldviews are dominant in what you just gave me, and what are alternative ways of seeing this? I find that this kind of exploration really helps me see more broadly, recognize the perspectives dominant in my own thinking, and explore what other ways of thinking might be more generative. [See this excellent piece by Jewlya Lynn on using AI to help with your own biases.]

I also use a lot of roleplay with AI: “You’re a program officer working on food security. If you were drawing on all of these strategy thinkers, what would three different strategies look like that exemplify three different worldviews?” AI gives us an astonishing ability to generate, compare, and contrast different ways of thinking and working in far less time than I’ve ever experienced. I am constantly exploring possible directions and their implications, to sharpen my thinking about how to move forward.

Since AI hallucinations tend to be foremost on people’s minds, it’s worth addressing accuracy here. For this kind of exploratory sparring, factual accuracy matters much less than having a rich set of scenarios to test your thinking against. It’s similar to scenario planning or futuring, where the point isn’t to predict the future correctly but to expand your range of readiness and strengthen your ability to respond to different situations. It certainly helps to have enough grounding in a topic to recognize when something feels wildly off (though honestly this has happened much less than I expected). But engaging with AI in this way isn’t about fact-finding - it’s about pressure testing and exploring the contours and consequences of our own thinking.

Valerie’s engagement practice: being interviewed by AI

One of my (Valerie) favorite ways to engage with AI in a way that expands my own thinking is to flip the dynamic and ask AI to interview me, especially when I’m feeling stuck or overwhelmed by the directions I could go in. I’ve realized that engaging with some questions will help me get my thinking going. Sometimes, I just ask AI to do this to get started with a task. But for more focused sessions, I built a project space where the instructions say something like: if I come with a half-baked idea, ask open-ended questions to “spark and stretch” my thinking. If I come with a detailed outline or draft, critique it — look for missing perspectives, weak arguments, and fuzzy language. [I was originally inspired to set this project space up by this wonderful piece from Alexandra Samuel.]

I answer by typing, by handwriting and transcribing later, or by just talking into the mic. Much like ‘free writing’ as a technique for learning, I’ve seen how ‘free responding’ gives me the space to talk out ideas and reach new insights. The key is that I’m doing the thinking. AI is shaping the sequence and depth of the questions in ways I probably wouldn’t get to on my own. The questions are pushing on my thinking and, as long as I engage with them authentically, they enable me to connect dots for myself. This interviewing pattern is actually how I drafted a case study recently: sitting in my car for an hour, talking through reflections into an AI that kept me moving, and then later turning that transcript into structured content. I had a number of ‘aha’ moments, much like the kind that emerge from free writing, but in a way that was accessible and manageable for me in the moment.

What we both noticed: In both cases, we’re using AI to make our own thinking visible to ourselves. Not to produce a finished product. Not even to get a “good” answer. The engagement itself becomes a way to surface what we already know (or don’t) and push past our default patterns of thinking or acting. That’s a fundamentally different relationship with the tool than engaging only to ask things like “give me a more concise draft of this email.” While there can be a time and place for such quick engagements, if the goal is to learn and deepen our thinking, we’ve found that there are a variety of fairly simple ways to do this.

Output: The moment we often skip

This last component tends to get collapsed into simply deciding whether “it gave me something useful” and is then forgotten. But the output stage is actually where much of the learning happens, if you slow down enough to notice. Pausing to reflect critically on the outputs, determining what was and wasn’t achieved, identifying improvements, and documenting this learning to improve future prompts/projects (or for a do-over of your current project) are all ways to build your learning while improving the effectiveness of the tool.

When you look at what AI gave you, can you identify whose perspective is centered in the output? Whose is missing? What did you accept without questioning, and why? What would you change if you were writing it from scratch? (That gap between AI’s version and yours is information worth paying attention to.) Are you using the output to start your thinking, or to replace it? [See this post by Hilary Gridley on how to use AI for writing if you hate AI.]

Pausing to reflect on the output is especially important because AI tools often encourage us to move quickly on to the next step by offering up follow-up tasks they can perform. Ultimately, AI tools are designed to maximize engagement, much like social media. The pattern — “Would you like me to turn that into an image? A PDF? A lesson plan?” — is the new infinite scroll. The output stage is where those dynamics become most visible, and where you have the most agency to push back. [If you’re curious about that thread, this piece goes deeper into what Valerie has described as cognitive protection.]

When we see the output as an artifact of an interaction (one in which we are in relationship and engaging with the AI, and in which both our agency and its assumptions determine the usefulness, accuracy, and effectiveness of the result), we give ourselves the ability to step back and think critically about what the interaction produced, what was useful, and what wasn’t. This may feel like a speed bump, but it’s actually an opportunity to shore up our own thinking and perspective.

The questions above aren’t a checklist. They’re more like a practice. The more you ask them, the more you start noticing your own patterns: where you over-trust, where you disengage too quickly, what you never think to ask. That noticing is the learning. Shifting your behaviors and shaping your engagement with AI is putting that learning into action.

Why this matters beyond productivity

We wanted to explore this topic because there is a lot of very generative (ha!) discussion about how to augment our thinking with AI, but it can be challenging to really see what that looks like in practice. As we articulated our approach-engage-output moments, we realized that reflecting on each of these stages offers a moment for thinking about our intentions and expectations regarding our AI use.

Augmentation, after all, is about getting better thinking from yourself.

When you pay attention to all three moments, you start seeing things you might have skipped over before. Your own defaults. The places where AI’s confident, well-written response makes you less critical rather than more. The questions you never think to ask until a sparring session surfaces them. The difference is between using AI to think with you, and using AI to avoid thinking.

So here’s a small experiment, if you’re up for it: pick one AI interaction this week and try to notice all three stages. Before you open the tool, pause and ask yourself what you’re actually trying to accomplish and what choices you’re making about the context and constraints you give the AI (approach). During engagement, notice what you’re handing over and what you’re holding onto — how are you using the AI to challenge and deepen your own thinking (engage)? After you get the output, sit with it for a moment before you use it or discard it — ask yourself what impact it’s had on your thinking (output). You can do this on your own but, we promise, it’s much better to discuss it with a friend!

We’d love to hear what you notice. What shifted? What surprised you? Where did you catch yourself on autopilot, and how did you find your way out?

This post was co-authored by Valerie and Kelci.

Valerie Ehrlich is the founder of Mission Bloom, where she helps mission-driven organizations approach AI with responsibility and intention. Kelci Price is the founder of Kelci Price Consulting LLC, focused on strategic learning and evidence practices for foundations. They are both part of the Emergent Learning community and have been collaborating on the intersection of AI and organizational learning for the past two years.

Valerie, Kelci, this is such a great piece ❤️

AI tools are designed to immediately offer the next thing, I hate this one the most: "want me to turn this into a thread? a presentation? a PDF?" 🤣 and if you don't pause you just keep going.

I've started building deliberate friction steps into my own workflows, like before I use anything AI gives me, I have to write one sentence about what I think is missing. Forces me to actually look at it instead of just shipping it :) Works embarrassingly well for something that takes ten seconds.

Thank you for sharing this!